AMD EPYC Milan Review Part 2: Testing 8 to 64 Cores in a Production Platform

by Andrei Frumusanu on June 25, 2021 9:30 AM EST

SPEC - Multi-Threaded Performance - Subscores

Picking up from the power efficiency discussion, let’s dive directly into the multi-threaded SPEC results. As usual, because these scores are not officially submitted to SPEC, we’re labelling the results as “estimates”, as per the SPEC rules and license.

We compile the binaries with GCC 10.2 on their respective platforms, with simple -Ofast optimisation flags and the relevant architecture and machine tuning flags: -march/-mtune=Neoverse-n1; -march/-mtune=skylake-avx512; and -march/-mtune=znver2 (used for Zen3 as well, since GCC 10.2 does not have znver3).

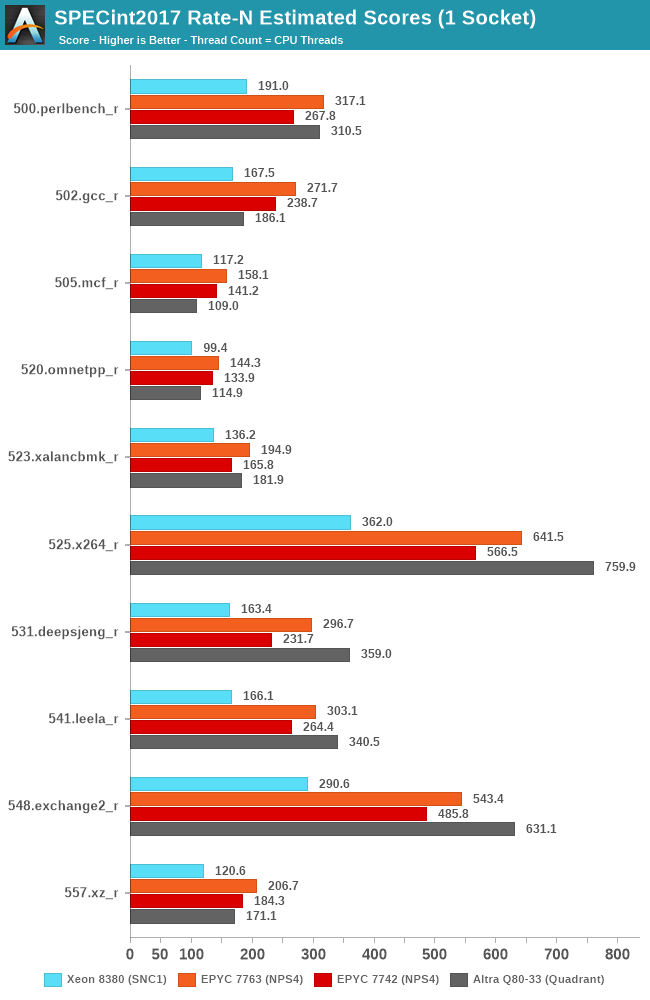

I’ll be going over two chart comparisons. The first covers the respective flagship parts: the new EPYC 7763 numbers, pitted against Intel’s 40-core Xeon Ice Lake SP and Ampere’s Altra Q80-33, along with the figures we have on AMD’s EPYC 7742. Note that the latter is a 225W part, compared to the 280W 7763.

In SPECint2017, the EPYC 7763 extends its lead over Intel’s current best CPU, improving the numbers beyond what we had originally published in our April review. While AMD also further narrows the gap to Ampere’s 80-core Altra SKU, there are still many core-bound workloads that notably favour the Neoverse N1 part given its 25% advantage in core count.

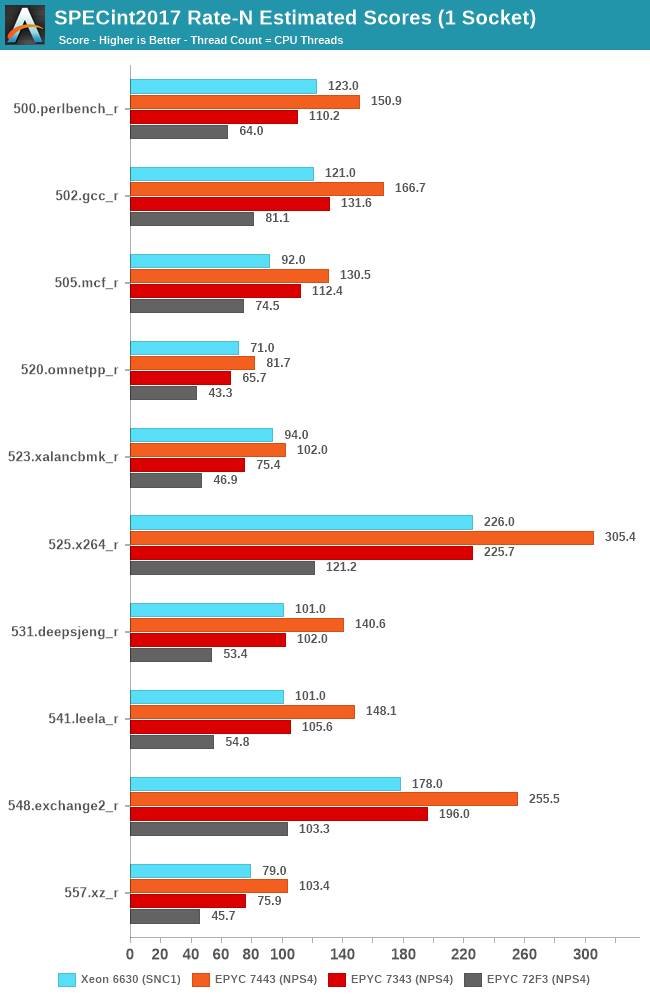

Also in SPECint2017, but this time focusing on the mid-tier SKUs, the interesting comparison points are the new 24-core EPYC 7443 and 16-core EPYC 7343 against the new 28-core Xeon 6330. What’s shocking here is that Intel’s new Ice Lake SP server chip not only has trouble competing against AMD’s 24-core chip, but even struggles to differentiate itself from AMD’s 16-core chip.

The 72F3 8-core part is interesting, but generally we have trouble placing such a SKU competitively, given that we don’t have a comparable part from the competition to pit against it.

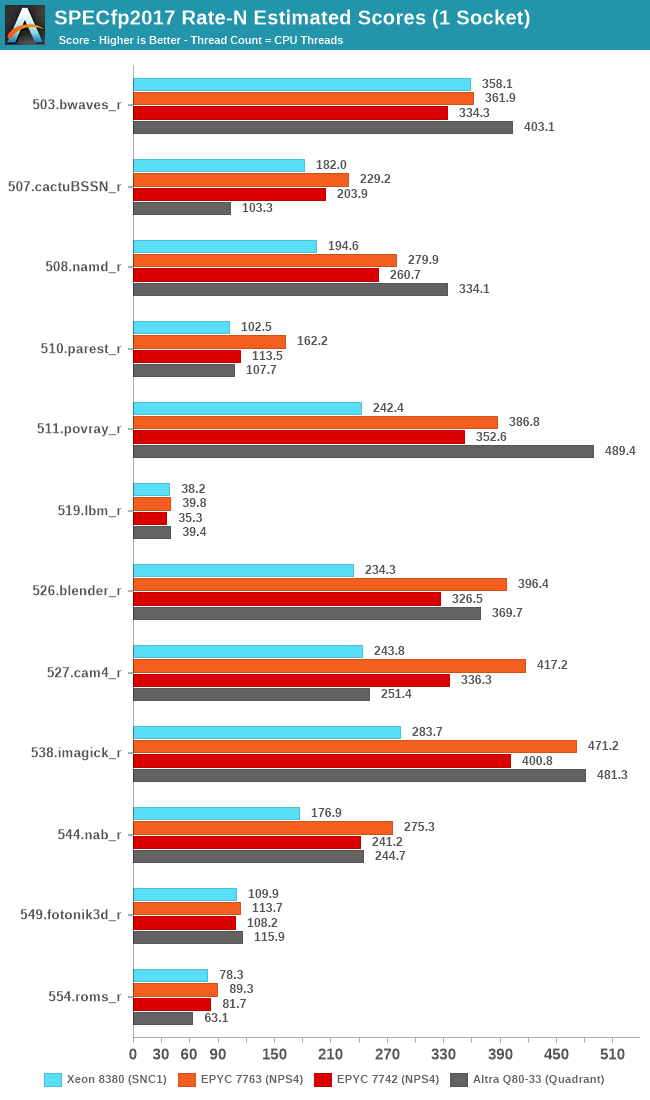

For the high-end SKUs, we again see the 7763 improve its performance positioning compared to what we had reviewed a few months ago, although with fewer large performance-boost outliers, due to the memory-heavy nature of the floating-point test suite.

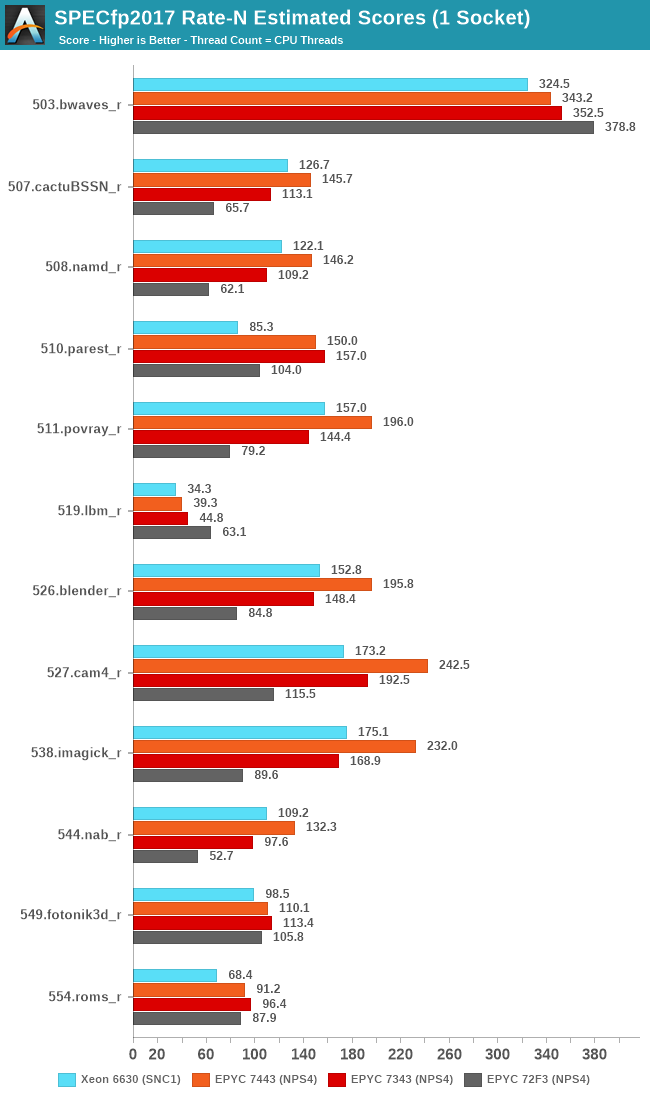

In the low-end SKUs, we see a similar story as in the integer suite, where AMD’s 16-core 7343 battles it out against Intel’s 28-core Xeon, while the 24-core unit is comfortably ahead of the competition by a good margin.

The 72F3 showcases some interesting scores here. Because more of these workloads are fundamentally memory bound, the core count deficit of this SKU doesn’t really hamper its performance compared to its siblings. If anything, the lower core count has some positive side-effects: it results in less cache and DRAM contention, and therefore less overhead and actually higher performance than the higher core count parts. Theoretically you could mimic this on the higher core count parts by simply running fewer workload instances and threads, but if a system deployment mostly runs workloads with these performance characteristics, the low-core-count 72F3 could make sense.

58 Comments

eastcoast_pete - Friday, June 25, 2021

Interesting CPUs. Regarding the 72F3, the part for highest per-core performance: Dating myself here, but I recall working with an Oracle DB that was licensed/billed on a per-core or per-thread basis (forgot which one it was). Is that still the case, and what other programs (still) use that licensing approach?

And, just to add perspective: the annual payments for that Oracle license dwarfed the price of the CPU it ran on, so yes, such processors can have their place.

flgt - Friday, June 25, 2021

More workstation than server, but companies like Ansys still require additional license packs to go beyond 4 cores with some of their tools, and they often come with hefty 5-figure price tags depending on the program and your organization's bargaining ability.

RollingCamel - Friday, June 25, 2021

It was refreshing to see Midas NFX running without core limitations. The core limitations are archaic and don't represent current development, unless the license policies have evolved in the past years.

realbabilu - Monday, June 28, 2021

Interesting to see an FEA implementation with the latest math kernel available, like the Midas NFX bench in AnandTech. Hopefully the AnandTech team got demos from Midas Korea for testing. Abaqus, MSC Nastran, Inventor FEA, anything will do.

However, I don't think Midas improved their math kernel. I had Midas Civil and GTS licensed, but couldn't use all the threads and all the memory I had on my PC, like other FEA did.

mrvco - Friday, June 25, 2021

Power unit pricing! LOL, the dreaded Oracle audit when they needed to find a way to make their quarterly numbers!?!?!

eek2121 - Friday, June 25, 2021

It blows my mind that people still use Oracle products.

Urbanfox - Sunday, June 27, 2021

For a lot of things there isn't a viable alternative. The hotel industry is a great example of this with Opera and Micros.

phr3dly - Friday, June 25, 2021

In the EDA world we pay per-process licensing. As with your scenario, the license cost dwarfs the CPU cost, particularly over the 3-year lifespan of a server. You might easily spend 50x the cost of the server on the licenses, depending on the number of cores. Trying to optimize core speed/core count/eventual server load against license cost is a fun optimization problem.

So yeah, the CPU cost is irrelevant. I'm happy to pay an extra several thousand dollars for a moderate performance improvement.
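The trade-off described above can be put into a minimal sketch. Everything in it is an assumption made for illustration: the prices, the one-licensed-process-per-core mapping, and the names `ServerOption`, `total_cost` and `cost_per_throughput` are hypothetical and do not come from any real EDA vendor's pricing.

```python
from dataclasses import dataclass


@dataclass
class ServerOption:
    name: str
    server_cost: float     # one-off hardware price in dollars (hypothetical)
    cores: int             # assumes one licensed process per core
    per_core_speed: float  # relative throughput of one process (1.0 = baseline)


def total_cost(option, license_cost_per_process_year, years=3):
    """Hardware price plus per-process license fees over the server's lifespan."""
    return option.server_cost + option.cores * license_cost_per_process_year * years


def cost_per_throughput(option, license_cost_per_process_year, years=3):
    """Dollars spent per unit of aggregate throughput; lower is better."""
    throughput = option.cores * option.per_core_speed
    return total_cost(option, license_cost_per_process_year, years) / throughput


if __name__ == "__main__":
    # Hypothetical inputs: a $10k server, a $15k server with 10% faster cores,
    # and a $10k per-process annual license fee.
    baseline = ServerOption("baseline", 10_000, 16, 1.0)
    faster = ServerOption("faster cores", 15_000, 16, 1.1)
    for option in (baseline, faster):
        print(option.name, round(cost_per_throughput(option, 10_000)))
```

With license fees of this size, the extra hardware spend is a small share of the total, so the option with faster cores ends up cheaper per unit of work even though the server itself costs more.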

eldakka - Saturday, June 26, 2021

> Oracle DB that was licensed/billed on a per-core or per-thread basis (forgot which one it was). Is that still the case, and what other programs (still) use that licensing approach?

Lots of enterprise applications still use that approach: Oracle (not just DB), IBM products such as WebSphere Application Server (all flavours, standalone, ND, Liberty, etc.) and messaging products like WebSphere MQ. I believe SAP uses it, and many Red Hat 'middleware' products (e.g. JBoss web server, EAS, etc.) use it as well.

In the enterprise space, it is basically the expected licensing model.

And the licensing cost per-core is usually 'generation' dependent. So you usually can't just upgrade from, say, a 20-core Xeon 6th-gen to a 20-core 8th-gen and expect to pay the same.

The typical model is a 'PVU', Processor Value Unit (different companies may give it a different label - and value different processors differently, but it usually boils down to the same thing). Each platform-generation (as decided by the software vendor, i.e. Oracle, or IBM, etc.) has a certain PVU per core. E.g., (making up numbers here) a POWER8 (2014ish release) might have a PVU of 4000, and an Intel Haswell/Ivy Bridge - E5/7 v2/3 I think (2014/15ish) - might be given 3500. So if using an 8-core P8 LPAR that'd be 32000 PVU, while an 8-core VI on E7 v3 would be 28000. And P9 might be 7000 PVU, a Milan might be 6000 PVU, so for 8 cores it'd be 56000 or 48000 respectively. Then there will be a dollar-per-PVU multiplier based on the software, so software bob could be x1 per year, so in the P9 case a $56k/year license, whereas software fred might be a x4 multiplier, so $224k/year.

And yes, there can be instances where software being run on a single server (not even necessarily a beefy server) can be $millions/year in licensing. If some piece of software is critical but has low CPU/memory requirements, such as an API layer running on x86 hardware that allows midrange-based apps to interface directly to mainframe apps, they can charge millions for it even if it's only using a combined 8 cores across 4 2-core server instances (for HA) - though in this case, where they know it requires tiny resources, they'll switch to a per-instance rather than a per-core pricing model, and charge $500k per instance for example.
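As a rough sketch of that arithmetic, using the same made-up PVU figures from the example above: the dictionary and function names are hypothetical, and none of the ratings are real vendor values.

```python
# Per-core PVU ratings. These are the made-up figures from the example above,
# not real vendor ratings.
PVU_PER_CORE = {
    "POWER8": 4000,
    "Xeon E7 v3": 3500,
    "POWER9": 7000,
    "Milan": 6000,
}


def total_pvu(platform: str, cores: int) -> int:
    """PVU total for a machine or partition: per-core rating times licensed cores."""
    return PVU_PER_CORE[platform] * cores


def annual_license_cost(platform: str, cores: int, dollars_per_pvu: float) -> float:
    """Yearly fee: PVU total times the software's dollar-per-PVU multiplier."""
    return total_pvu(platform, cores) * dollars_per_pvu


if __name__ == "__main__":
    for platform in PVU_PER_CORE:
        print(f"{platform}: {total_pvu(platform, 8)} PVU for 8 cores")
    # Software "bob" at x1 and software "fred" at x4, both on an 8-core P9.
    print(annual_license_cost("POWER9", 8, 1.0))
    print(annual_license_cost("POWER9", 8, 4.0))
```

The same 8 cores cost a different amount depending only on which row of the table the vendor assigns to the CPU, which is why a like-for-like core count upgrade can still change the bill.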

The per-core PVU can even change within a generation depending on specific CPU feature sets, e.g. the 2TB per-socket limited Xeon might be 5000 PVU, but the 4TB per-socket SKU of the same generation might be 6000 PVU, just because the vendor thinks "they need lots of memory, therefore they are working on big data sets, well, obviously, that's more money!" They want their tithe.

Oh, and nearly forgot to mention, the PVU can even change depending on whether the software vendor is trying to push their own hardware. IBM might give a 'PVU' discount if you run their IBM products on IBM Power hardware versus another vendor's hardware, to try and push Power sales. So even though, in theory, a P9 might be more PVU than a Xeon, since you are running IBM DB2 on the P9 they'll charge you less money for what is conceptually a higher PVU than on, say, a Xeon, to nudge you towards IBM hardware. Oracle has been known to do this with their acquired SPARC (Sun)-based hardware too.

eastcoast_pete - Saturday, June 26, 2021

Thanks! I must say that I am glad I don't have to deal with that aspect of computing anymore. I also wonder if people have analyzed just how severely these pricing schemes have blunted or prevented advancements in hardware capabilities?